In this tutorial I will walk you through a few simple steps for creating a rollover button using Photoshop, CSS, and HTML.

In a previous tutorial I showed you how to create a social networking sticker icon, so I am going to use that in this tutorial.

For this tutorial you will need Photoshop or an equivalent image editor, plus an HTML editor such as Dreamweaver, which is what I will be using.

The first step is to open your image and save two versions of it. The first image will be the flat sticker and the second will be the sticker peeled back.

To make this easier here is the .psd file for this tutorial.

Open up the .psd file and you will see layers in your palette.

And this on your canvas:

You can see the fold layer is showing on top of the sticker, so hide that layer by clicking the little eye icon beside it in your layers palette.

There is still one layer showing that we do not need for the first image, so also hide the layer underneath the “twitter” group.

After that you should have the first sticker image ready for saving. I am going to save my images as .png files so they can be transparent on any background color. To keep that transparency, hide your background layer before saving so there is no white background behind the sticker.

Once you hide your background layer you should see a checkerboard pattern behind the sticker, which is how Photoshop shows transparent areas:

Now go to File > Save for Web… or use the keyboard shortcut Alt+Shift+Ctrl+S.

That feature opens a window where you can choose the file type to save the image as.

On the right side, choose PNG-24 from the drop-down.

Save all of these files in the same folder because this will make the tutorial easier later.

So I will save mine as rollover1.png

For the second image, unhide the two layers we hid earlier and hide the other layers except for the “twitter” group.

Now I will save this file as rollover2.png

That is all we need to do in Photoshop. Now I will move on to my HTML editing software, Dreamweaver MX.

In Dreamweaver I will press Ctrl+N to start a new document and pick a basic HTML page.

Then I will save my HTML file as rolloverbutton-tutorial.html in the same folder as my images.

One thing I really like about Dreamweaver is how the screen is split into a design view and a code view. At the top is the code view, and here is what it contains by default.

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">

<html>

<head>

<title>Untitled Document</title>

<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1"> </head>

<body> </body>

Next between the “body” tags in my code view I am going to write “Twitter”…

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">

<html>

<head>

<title>Untitled Document</title>

<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1"> </head>

<body> Twitter </body>

I want the Twitter text to be a clickable link, so in my HTML editor I will highlight the text in design view.

Now in the Properties panel at the bottom of my window I will type http://www.twitter.com into the Link field.

Now you can see the link is clickable in the design view and also an “a href” tag was added around the text in the code view.

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">

<html>

<head>

<title>Untitled Document</title>

<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1"> </head>

<body> <a href="http://www.twitter.com">Twitter</a> </body>

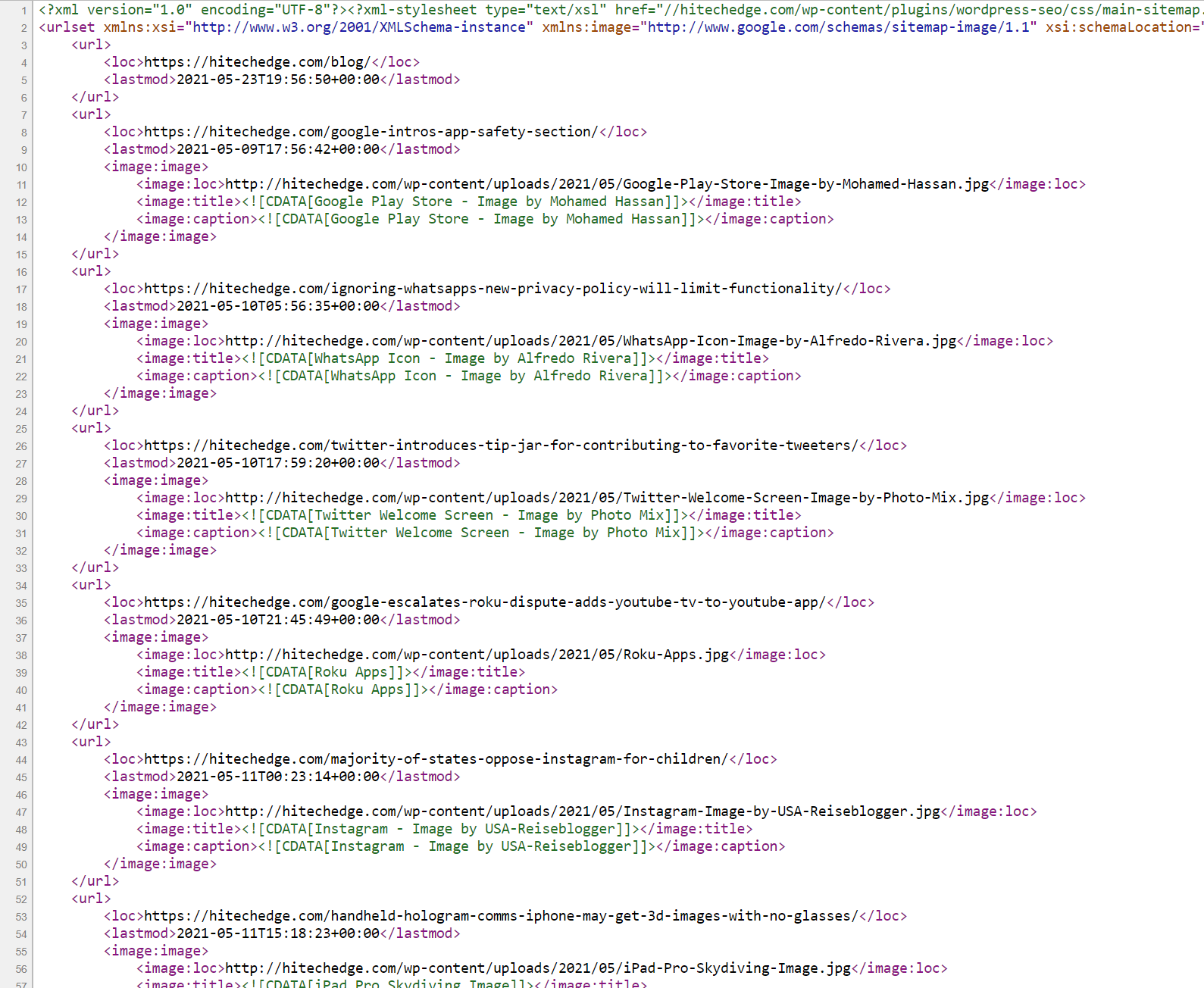

Next I will start creating the stylesheet needed for the rollover button. You can use an external stylesheet or put the styles directly in the HTML. I will show the version written directly in the HTML since this is a beginner CSS tutorial.
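For comparison, here is a rough sketch of what the external stylesheet option could look like. The file name rollover.css is only an assumption for illustration; the rest of this tutorial does not use it.

```html
<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">
<html>
<head>
<title>Untitled Document</title>
<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1">
<!-- rollover.css is a hypothetical file name; it would sit in the same folder as this page -->
<link rel="stylesheet" type="text/css" href="rollover.css">
</head>
<body>
<a href="http://www.twitter.com">Twitter</a>
</body>
</html>
```

With this approach the CSS rules would live in the separate file instead of between style tags, which is handy when several pages share the same button.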

Just above your </head> tag write <style type="text/css">. When you do that in Dreamweaver, the closing </style> tag is generated automatically. A style block belongs inside the head section, so make sure both tags sit before </head>.

After doing that, this is what I have in my code:

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">

<html>

<head>

<title>Untitled Document</title>

<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1">

<style type="text/css">

</style>

</head>

<body> <a href="http://www.twitter.com">Twitter</a> </body>

The coding for the rollover will be very simple. I want to add a div tag around the text so I can control it with CSS styles. So you need to decide what you want to call the div that will surround the “Twitter” text. I will call mine “rollovericons”.

So above your Twitter text write <div class="rollovericons">. The class tells the browser which CSS styles to apply.

Once again Dreamweaver will create the closing tag automatically and place it right after the opening div you just made. Move that closing </div> so it sits after the closing </a> tag of the Twitter link.

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">

<html>

<head>

<title>Untitled Document</title>

<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1">

<style type="text/css">

</style>

</head>

<body>

<div class="rollovericons"> <a href="http://www.twitter.com">Twitter</a> </div>

</body>

Now it's time to define some styles to use in the HTML. In the next code view I will show you all the CSS I created for this document and explain it with little comments in the code itself.

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">

<html>

<head>

<title>Untitled Document</title>

<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1">

<style type="text/css">

/* Main style for the Twitter link. The #twitter part is an ID that will be placed in the link's a href tag. */
.rollovericons a#twitter {
  display: block; /* display the link as a block so the width and height apply and the image shows up correctly */
  width: 193px; /* defining the width of the icon image */
  height: 192px; /* defining the height of the icon image */
  background: url(rollover1.png) no-repeat; /* path to the normal image */
}

/* What happens to the link when you roll over it with the mouse */
.rollovericons a:hover#twitter {
  background: url(rollover2.png) no-repeat; /* path to the rollover image */
}

/* A span tag will be placed around the Twitter text inside the link to make the text disappear */
.rollovericons a span {
  display: none;
}

</style>

</head>

<body>

<div class="rollovericons"> <a href="http://www.twitter.com">Twitter</a> </div>

</body>

Now that you have defined your CSS, you just need to add a few things to the Twitter link to get it to display the icon correctly. Here is what the link will look like: <a href="http://www.twitter.com" id="twitter" title="Join Us On Twitter!"><span>Twitter</span></a>

The id="twitter" attribute matches the #twitter part of the CSS, and the added span makes the text disappear. The title, “Join Us On Twitter!”, will pop up as a tooltip when you hover over the button.
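If you want to try the hover behavior without the image files, the same selectors can be tested with plain background colors. This is only a sketch for experimenting: the two color values and the page title are assumptions standing in for rollover1.png and rollover2.png.

```html
<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">
<html>
<head>
<title>Hover Test</title>
<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1">
<style type="text/css">
/* Same selectors as the tutorial; the colors are stand-ins for the two PNG files */
.rollovericons a#twitter {
  display: block;
  width: 193px;
  height: 192px;
  background: #33ccff; /* stand-in for rollover1.png */
}
.rollovericons a:hover#twitter {
  background: #0099cc; /* stand-in for rollover2.png */
}
.rollovericons a span {
  display: none; /* hides the link text, leaving only the colored block */
}
</style>
</head>
<body>
<div class="rollovericons">
<a href="http://www.twitter.com" id="twitter" title="Join Us On Twitter!"><span>Twitter</span></a>
</div>
</body>
</html>
```

If the block changes shade when the mouse moves over it, the selectors are wired up correctly and any remaining problem is likely in the image paths.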

Here is the final working code:

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">

<html>

<head>

<title>Rollover Icon Tutorial</title>

<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1">

<style type="text/css">

.rollovericons a#twitter {
  display: block;
  width: 193px;
  height: 192px;
  background: url(rollover1.png) no-repeat;
}

.rollovericons a:hover#twitter {
  background: url(rollover2.png) no-repeat;
}

.rollovericons a span {
  display: none;
}

</style>

</head>

<body>

<div class="rollovericons"> <a href="http://www.twitter.com" id="twitter" title="Join Us On Twitter!"><span>Twitter</span></a> </div>

</body>

Now if I preview my HTML I will see this:

As long as you save your HTML document and images in the same folder, the images should show up fine.

And if I roll my mouse over it, this will happen:

Test the button out for yourself here

I also went a step further to show how you can make three types of social icons do the same thing. You can view the code to see the CSS involved.

Check out the more complex version!
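As a rough idea of how several icons could share the same technique, here is a minimal sketch. It is not the code from the more complex version: the #facebook and #rss IDs, their link addresses, and all six image file names are assumptions made up for illustration, and it assumes every icon is the same size as the Twitter sticker.

```html
<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN">
<html>
<head>
<title>Rollover Icons Sketch</title>
<meta http-equiv="Content-Type" content="text/html; charset=iso-8859-1">
<style type="text/css">
/* Shared settings for every icon link inside the div */
.rollovericons a {
  display: block;
  width: 193px;
  height: 192px;
}
/* Hide the text of every link */
.rollovericons a span {
  display: none;
}
/* One normal image and one rollover image per icon (hypothetical file names) */
.rollovericons a#twitter { background: url(twitter1.png) no-repeat; }
.rollovericons a#twitter:hover { background: url(twitter2.png) no-repeat; }
.rollovericons a#facebook { background: url(facebook1.png) no-repeat; }
.rollovericons a#facebook:hover { background: url(facebook2.png) no-repeat; }
.rollovericons a#rss { background: url(rss1.png) no-repeat; }
.rollovericons a#rss:hover { background: url(rss2.png) no-repeat; }
</style>
</head>
<body>
<div class="rollovericons">
<a href="http://www.twitter.com" id="twitter" title="Join Us On Twitter!"><span>Twitter</span></a>
<a href="http://www.facebook.com" id="facebook" title="Facebook"><span>Facebook</span></a>
<a href="feed.xml" id="rss" title="RSS Feed"><span>RSS</span></a>
</div>
</body>
</html>
```

Because each link is displayed as a block, the icons in this sketch stack vertically. The shared rules keep the per-icon CSS down to just the two background images.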

Thanks for reading, I hope you learned something from this!